Computer processors have kept getting faster for decades, a trend captured by Moore’s Law: the observation that the number of transistors on a chip doubles roughly every two years. However, there are physical limits to how small transistors can get, and eventually we will reach them. When that happens, we may need to turn to alternatives such as quantum computing, a type of computing that uses the principles of quantum mechanics.

It seems like everything we do these days is in some way linked to computers. Our financial systems, social connections, communication networks, entertainment… our digital lives are rather incredible, right? That is especially remarkable since computers are a relatively “new” thing.

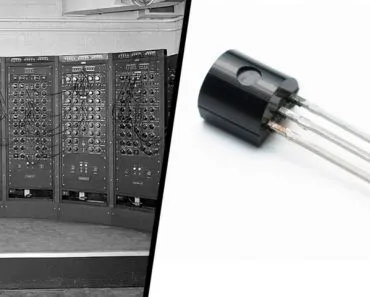

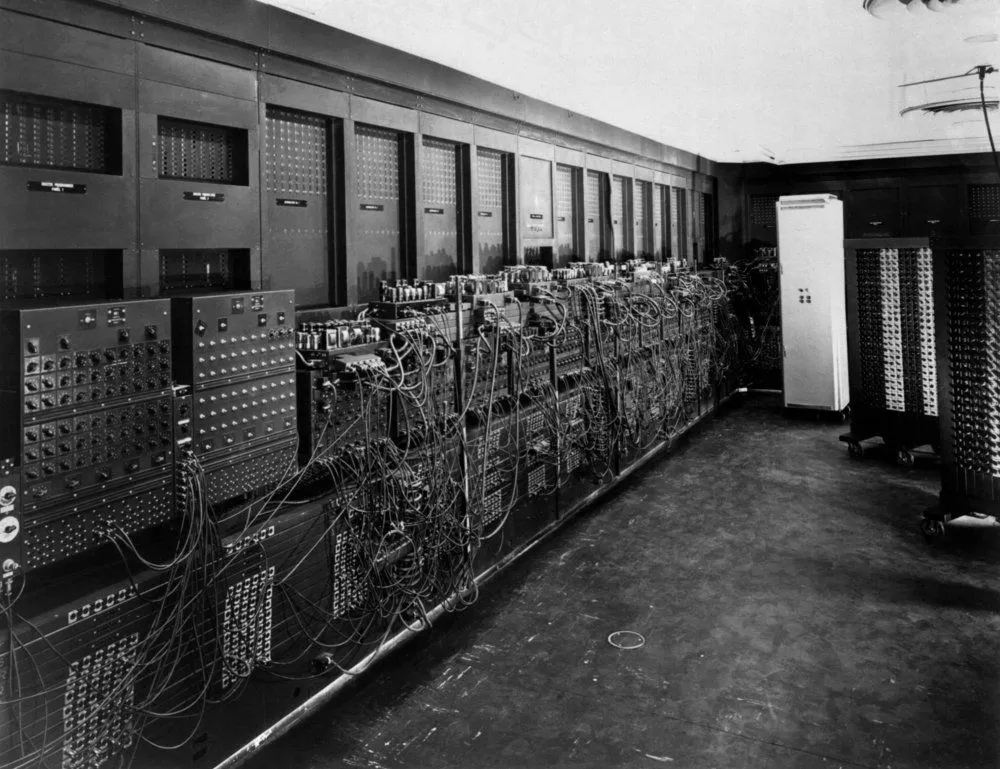

The first general-purpose electronic computer, ENIAC (pictured), was unveiled in 1946, and it was about as big as a house. It could perform roughly 5,000 additions per second, a tiny fraction of what a modern smartphone can do!

Think about how far we have come since then! We can perform billions of calculations per second, see and speak to people across the world instantly, and access almost any piece of information known to humankind with the swipe of a finger.

It sometimes feels like there is nowhere left to go! Computer companies keep improving every product they develop, in both functionality and speed, but is there a limit to this progress? Can computers keep getting faster forever?

Moore’s Law Will Sort This Out…

The speed of computers is fundamentally linked to the microchips they use, and more specifically, to the number of transistors on those microchips.

Back in 1965, Gordon Moore, who would later co-found Intel, one of the largest multinational technology companies in the world, made a bold prediction. He observed that the number of components that could be placed on an integrated circuit was doubling at a steady pace, and in 1975 he revised his forecast to a doubling roughly every two years. Since more transistors on a chip generally mean faster, more powerful computers, this implied astonishing growth. At the time, such sustained progress seemed hard to believe, but for several decades that is roughly the rate of increase we have observed in our microchips.

Constant advancements have allowed roughly twice as many transistors to be placed on chips every two years. It was an amazing prediction, and it has since become known as Moore’s Law. Unfortunately, Moore’s Law has natural limits, and we are already beginning to see them today!
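To see how quickly a two-year doubling compounds, here is a minimal Python sketch of the arithmetic behind Moore’s Law. It assumes a perfectly steady doubling period, which real chips only roughly followed, and uses the Intel 4004 (1971, about 2,300 transistors) as an illustrative starting point. The function name `projected_transistors` is hypothetical, chosen for this example.

```python
def projected_transistors(start_count: float, start_year: int, target_year: int,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count forward, assuming it doubles every
    `doubling_period_years` years (the two-year form of Moore's Law)."""
    elapsed = target_year - start_year
    doublings = elapsed / doubling_period_years
    # Each doubling multiplies the count by 2, so n doublings multiply it by 2**n.
    return start_count * 2 ** doublings


if __name__ == "__main__":
    # Illustrative starting point: Intel's 4004 (1971), roughly 2,300 transistors.
    for year in (1971, 1981, 1991, 2001, 2011, 2021):
        count = projected_transistors(2_300, 1971, year)
        print(f"{year}: ~{count:,.0f} transistors")
```

Fifty years of two-year doublings multiplies the starting count by 2^25, about 33.6 million, which is why even a modest-sounding doubling rate quickly produces astronomical numbers.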

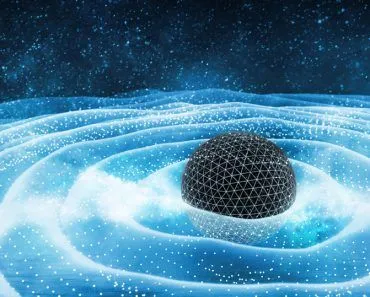

We are already making transistors whose smallest features are only a few atoms thick, which is incredibly small, but what happens when we reach the scale of individual atoms and can shrink them no further? Researchers have already considered that possibility, which is one reason quantum computing is now an important area of study.

Quantum computing is built on the principles of quantum mechanics. Instead of bits that are either 0 or 1, a quantum computer uses qubits, which can exist in a superposition of both states and can be entangled with one another. For certain kinds of problems, this could allow far more computation than our current technology can manage, perhaps trillions more calculations per second!
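Superposition is easier to picture with a small classical simulation. The minimal Python sketch below (the helper names are hypothetical, chosen for illustration) shows two things: describing n qubits requires 2^n amplitudes, and applying a Hadamard gate to each qubit of the all-zeros state spreads the probability evenly over every possible outcome. It only models the bookkeeping; it does not demonstrate a quantum speedup, which comes from specific algorithms that use interference to make correct answers more likely.

```python
import math


def state_vector_length(num_qubits: int) -> int:
    """A classical description of n qubits needs 2**n complex amplitudes."""
    return 2 ** num_qubits


def uniform_superposition(num_qubits: int) -> list[complex]:
    """Amplitudes that result from applying a Hadamard gate to every qubit
    of the all-zeros state |00...0>.

    Each of the 2**n basis states gets amplitude 1/sqrt(2**n).
    """
    size = state_vector_length(num_qubits)
    amplitude = 1 / math.sqrt(size)
    return [complex(amplitude, 0.0)] * size


def measurement_probabilities(state: list[complex]) -> list[float]:
    """Born rule: the probability of each outcome is |amplitude|**2."""
    return [abs(a) ** 2 for a in state]


if __name__ == "__main__":
    for n in (1, 10, 50):
        print(f"{n} qubits -> {state_vector_length(n):,} amplitudes")
    probs = measurement_probabilities(uniform_superposition(3))
    print(probs, sum(probs))
```

Fifty qubits already need 2^50, about 1.1 quadrillion, amplitudes, which hints at why quantum hardware is so hard to imitate with ordinary chips.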

One theoretical calculation has estimated the performance of a “perfect” quantum computer. While the math is rather advanced, the result suggests that a perfectly efficient quantum computer could perform ten quadrillion times more calculations per unit of energy than the fastest processors yet developed.

In such a world, Moore’s Law might almost never end. Some critics, however, make a very different argument, one closely linked to another hot-button topic in the tech community: artificial intelligence!

Can Robots Build Better Computers Than Humans?

The other predominant theory is that at some point we will have built enough computing power and capacity to emulate the human brain, which some equate with creating a consciousness. The popular term for this is artificial intelligence, and while it is an exciting idea, it is also frightening in some ways.

If we create an artificial intelligence that can keep designing and improving computers far beyond what humans could achieve, and Moore’s Law does not break down, humanity could be in jeopardy: our natural intelligence would quickly be surpassed by the computers, robots, and machines we have imbued with a “consciousness”.

If its abilities followed Moore’s Law, this first-generation machine intelligence might design a successor twice as capable as the human brain within two years, and who knows where that could lead? What would exist two years after that? What about ten years later? Human beings might be completely unnecessary by then, replaced by a far superior intelligence.

In other words, human ingenuity may (for now) be the limiting factor in keeping Moore’s Law going, but our artificially intelligent computers might not have that limit. You’ve all seen the Terminator movies, right? Plenty of theoreticians have proposed what could happen if an artificial intelligence program were to access the Internet. Robotic takeover, the elimination of humanity, the launching of nuclear weapons… the Hollywood scenarios go on and on.

However, it isn’t just Hollywood worrying about this. Elon Musk, co-founder of PayPal and Tesla, founder of SpaceX, and a personal hero to millions around the globe, has also warned about the dangers of artificial intelligence made possible by ever-faster processors and other computing advances. He likened it to opening Pandora’s Box, as we have no realistic way of predicting what it could mean for our species and our future!

Are These Our Only Options?

It sounds a bit bleak when we look at it from those two perspectives, but are those really the only outcomes for our future?

Fortunately, no!

Recently, researchers have made impressive advances with graphene, a material with remarkable properties and applications in many different industries. IBM, one of the most cutting-edge companies in tech, has created the most advanced graphene-based chip yet, reportedly performing 10,000 times better than earlier graphene chips. In a field where smaller and faster are the foundations of success, graphene may be the next big thing!

By adding graphene as the final step of the chip fabrication process, engineers have avoided the performance losses that graphene’s fragile, single-atom-thick structure caused in other graphene chip designs. That single-atom-thick structure is what lets electrons (and therefore information) move so quickly through graphene, but it also makes the material difficult to work with. Fortunately, IBM has taken the first steps towards fully exploiting graphene’s potential.

This could extend the life of Moore’s Law considerably, allowing us to use this one-atom-thick material as a crucial component in transistors and microchips far more advanced than what we have now.

The Near Future… Or What’s Left Of It!

Based on Moore’s Law and the limits set by quantum mechanics, some estimate that we will reach the maximum possible processing power in roughly 70 years. Critics of that claim, however, argue that Moore’s Law could begin to break down in as little as 15 years, largely because transistors are already so small.
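Why would already-tiny transistors shorten the timeline? A minimal Python sketch of the reasoning: if the number of transistors on the same area doubles, the area per transistor halves, so each linear dimension shrinks by a factor of √2 per doubling. The inputs below (a 10 nm feature today and a floor of about 0.5 nm) are hypothetical, and commercial process names such as “7 nm” no longer describe a physical dimension of the transistor, so treat the numbers as illustrative only. The function names are also ours, for illustration.

```python
import math


def doublings_until_limit(feature_nm: float, limit_nm: float) -> int:
    """Count the whole density doublings that fit before a feature would
    shrink below limit_nm.

    Doubling the transistors on the same area halves the area per transistor,
    so each linear dimension shrinks by a factor of sqrt(2) per doubling.
    """
    if feature_nm <= limit_nm:
        return 0
    # Largest whole k with feature_nm / sqrt(2)**k >= limit_nm,
    # i.e. k <= 2 * log2(feature_nm / limit_nm).
    return math.floor(2 * math.log2(feature_nm / limit_nm))


def years_until_limit(feature_nm: float, limit_nm: float,
                      doubling_period_years: float = 2.0) -> float:
    """Convert the doubling count into years at a steady Moore's Law pace."""
    return doublings_until_limit(feature_nm, limit_nm) * doubling_period_years


if __name__ == "__main__":
    # Hypothetical inputs: a 10 nm feature today, and a floor of about 0.5 nm,
    # only a couple of silicon atoms across.
    print(doublings_until_limit(10, 0.5), "doublings")
    print(years_until_limit(10, 0.5), "years")
```

With those made-up inputs the sketch gives 8 doublings, or about 16 years, in the same range as the critics’ figure; nudging either input changes the answer, which is one reason the estimates vary so widely. It also assumes shrinking is the only way to add transistors, something new materials such as graphene could change.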

Regardless of how long it takes for us to reach the ultimate speed of computers, I don’t really mind, as long as I can keep streaming every football game on my smartphone! Who’s with me?
