
Different countries have different standard voltages because of the Edison-Tesla rivalry. Edison wanted to use DC power, while Tesla proposed AC power. AC power won out in the end, but the standard voltage and frequency were different in different parts of the world. Attempts to standardize the voltage and frequency have been unsuccessful.

The electricity that we use in our homes, offices, hotels and everywhere else offers a convenience that we generally overlook, until it turns into an inconvenience, of course. If you’re a globetrotter, for example, you have probably realized that it’s a huge hassle to carry a medley of adapters, converters, and transformers just to draw power from the variety of sockets abroad!

The more you look into these different types of plugs, the sillier it all appears. If you buy a charger from a store in Washington, you won’t be able to plug it in when you land in Paris. And if you buy another one at an airport in Paris and fly off the next day to London, it once again becomes unusable! That’s the hassle with plugs, but the bigger hassle is the voltage and, to some extent, the frequency. Even with a compatible plug, connecting a device to a supply whose voltage or frequency differs from what it was designed for is risky. In the worst case, you could blow out your adapter and the connected device.

So why do we have different levels of supplied voltage and frequency in different places? Well, to understand this discrepancy, we need to brush up on the physics of electricity and then take a deep dive into history to unravel the mystery of miscellaneous supplied voltages across the globe!


Basics Of Electricity

The electricity that powers our homes basically comes in two types: DC (direct current) and AC (alternating current). Direct current flows steadily in one direction, while alternating current periodically reverses its direction and changes its intensity. The number of complete back-and-forth cycles per second is the frequency, measured in hertz.
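To make the difference concrete, here is a minimal Python sketch that models both kinds of supply. It assumes an ideal, perfectly smooth sine wave for AC and treats the quoted voltage as an RMS value, which is how mains voltages are normally stated. The function names and the 12-volt and 120-volt, 60-hertz figures are illustrative choices, not measurements.

```python
import math

def dc_voltage(t, volts):
    """Ideal DC: the same value at every instant t (seconds)."""
    return volts

def ac_voltage(t, v_rms, freq_hz):
    """Ideal sine-wave AC at time t (seconds).

    Assumes a pure sine wave, whose peak is sqrt(2) times its RMS value.
    """
    v_peak = v_rms * math.sqrt(2)
    return v_peak * math.sin(2 * math.pi * freq_hz * t)

# Example values only: a 12 V DC source and a 120 V (RMS), 60 Hz AC supply.
period = 1 / 60  # one full AC cycle lasts about 16.7 milliseconds
for fraction in (0.0, 0.25, 0.5, 0.75):
    t = fraction * period
    print(f"t = {t * 1000:5.2f} ms  DC = {dc_voltage(t, 12.0):5.1f} V  "
          f"AC = {ac_voltage(t, 120.0, 60.0):7.1f} V")
```

Over one cycle, the DC value stays flat, while the AC value climbs to a positive peak, falls back through zero, and swings to an equally large negative peak.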

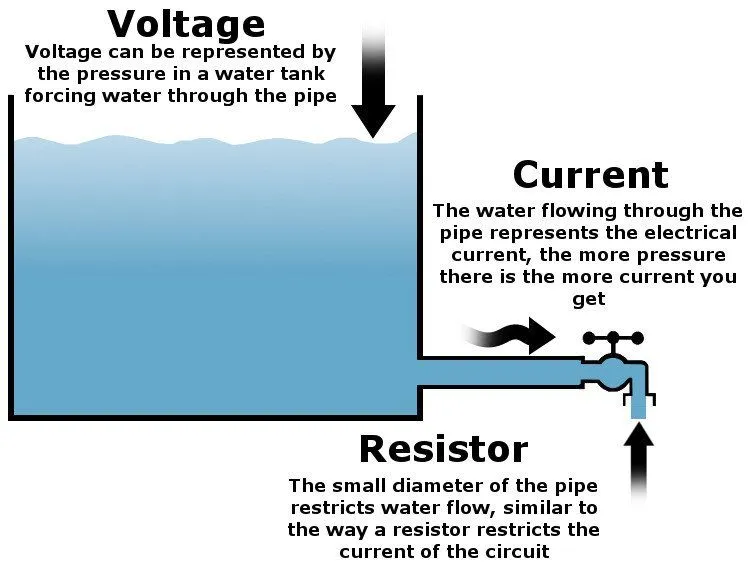

Voltage (measured in volts) is the measure of the potential difference between two points in an electrical field. To put this in perspective, consider a stream of water coming from a water tank. The amount of water coming out is the electric charge, the water flow is the electric current, and the pressure at which the water flows represents voltage.

To make any electrical device (say, a laptop) work, you need to provide it with an electric current. Electric current represents the rate of flow of charge through a conductor. So, whenever you plug your appliance into a wall socket and turn it on, the potential difference across the socket’s terminals drives a current (read as electricity) through the laptop charger, which powers the connected appliance.

Now, the value of the supplied voltage is typically in the hundreds of volts, but it varies from country to country. For instance, in the United States, the standard voltage is 120 volts (at 60 hertz), whereas in India, it’s around 230 volts (at 50 hertz); other countries may have yet another operating voltage. This brings us to the obvious question: why is this so? The answer lies in the history of electricity and the aftermath of its discovery.

How The Edison-Tesla Rivalry Led To Different Supply Voltages

Thomas Edison developed a commercially practical light bulb in the late nineteenth century. Being a visionary businessman, he dreamt of electrifying New York City with his new invention. His idea was to string metal wires on poles along the city’s streets to carry direct current (DC) to houses. However, transporting low-voltage DC over a long distance isn’t practically feasible, because a large share of the energy is lost as heat in the wires on account of their resistance.

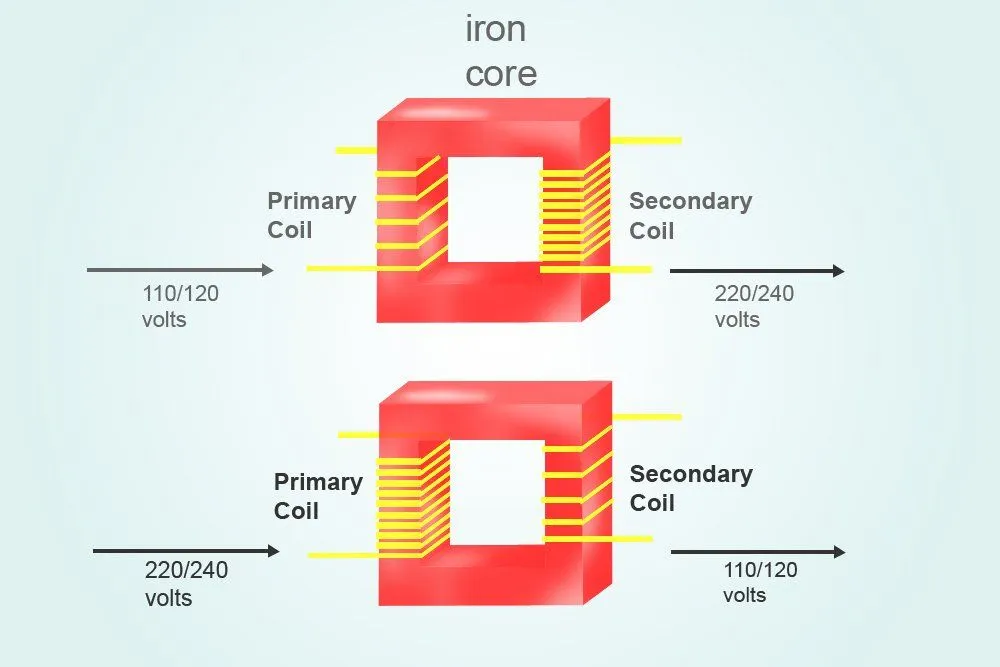

Nikola Tesla, another great inventor of that era, proposed the idea of alternating current (AC) as a method to electrify homes. The hurdles faced by DC could be overcome using AC, which relied on another great invention: the transformer. Transformers make it easy to scale the voltage up or down with negligible power loss. Stepping the voltage up for transmission lowers the current needed to deliver the same power, which in turn cuts the energy lost in the wires.
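How a transformer trades voltage for current can be sketched with the textbook relations for an ideal (lossless) transformer: the voltage scales with the ratio of turns between the two windings, and the current scales the opposite way, so the power stays the same. The function name and all numbers below are hypothetical, chosen only for illustration. Real transformers lose a small amount of power.

```python
def ideal_transformer(v_primary, i_primary, n_primary, n_secondary):
    """Return (secondary voltage, secondary current) for a lossless transformer.

    Assumes the ideal-transformer relations: voltage scales with the
    turns ratio, current scales inversely, and power is conserved.
    """
    ratio = n_secondary / n_primary
    v_secondary = v_primary * ratio
    i_secondary = i_primary / ratio
    return v_secondary, i_secondary

# Hypothetical step-up example: 240 V at 100 A into a 1:10 transformer.
v_out, i_out = ideal_transformer(240.0, 100.0, n_primary=100, n_secondary=1000)
print(f"Secondary side: {v_out:.0f} V at {i_out:.0f} A")
print(f"Power in: {240.0 * 100.0:.0f} W, power out: {v_out * i_out:.0f} W")
```

In this sketch the voltage rises tenfold while the current falls tenfold. The lower current is what matters for long wires, and a second transformer near the customer can step the voltage back down.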

Tesla was an intelligent engineer and astutely posited that the best frequency for AC transmission would be 60 hertz and the optimal voltage would be 240 volts. However, as Edison’s systems were built around 110 volts at that time, the two inventors clashed over which voltage should be adopted.

Edison tried his best to lobby for DC and made all possible attempts to convey that the “240 volts” AC suggested by Tesla was too dangerous to be used in the household. To prove his point, he invited people to a place where Tesla’s high-voltage system was installed and had pet animals electrocuted using the system. Edison also happened to be the inventor of the motion picture camera, and such electrocutions were recorded on film. One of those recordings is still preserved on the Internet. It shows an elephant by the name of Topsy being electrocuted, and it is often cited as part of the effort to convince people that high-voltage AC was very dangerous.

Although Edison couldn’t really establish DC as the household supply of electricity, he was successful in convincing the authorities of the time that “240 volts” AC was too dangerous. New York back then adopted 110-volt AC, the voltage around which Edison’s systems were built, to reduce the risk of high-voltage electricity killing people. Although low-voltage AC was safer to use, it led to more power loss than systems using 240-volt AC.
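The trade-off between safety and power loss can be estimated with a rough Python sketch. It assumes a simple resistive load that draws the same power at either voltage, so the current is the power divided by the voltage and the heat lost in the wire is the current squared times the wire’s resistance. The 1,200-watt load and 0.5-ohm wire are hypothetical numbers picked for easy arithmetic.

```python
def line_loss_watts(load_power_w, supply_voltage_v, wire_resistance_ohm):
    """Power lost as heat in the supply wire for a given load.

    Assumes a purely resistive load: current = power / voltage,
    and wire loss = current squared times wire resistance.
    """
    current_a = load_power_w / supply_voltage_v
    return current_a ** 2 * wire_resistance_ohm

# Hypothetical values: a 1,200 W load fed through 0.5 ohm of wire.
load_w = 1200.0
wire_ohm = 0.5
loss_110 = line_loss_watts(load_w, 110.0, wire_ohm)
loss_240 = line_loss_watts(load_w, 240.0, wire_ohm)
print(f"Loss at 110 V: {loss_110:.1f} W")
print(f"Loss at 240 V: {loss_240:.1f} W")
print(f"The 110 V supply loses {loss_110 / loss_240:.1f} times as much")
```

Under these assumptions, the loss scales with the inverse square of the voltage, so roughly doubling the voltage cuts the wire loss to about a quarter.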

Tesla’s design for AC power transmission was first implemented for commercial use by Westinghouse Electric in the US. They adopted a 60-hertz frequency with 110 volts for AC transmission.

In Europe, on the other hand, people were not that worried about the perils of high-voltage AC. They mostly chose voltages between 220 and 240 volts. BEW was one of the early companies in Europe to get into the AC transmission business, and went for 240 volts. Oddly, they chose 50 hertz instead of 60 hertz. This 50-hertz AC, with a voltage between 220 and 240 volts, gradually spread across the continent.

Colonial powers like Britain, France, Spain, Portugal, etc. began setting up electrical systems in their colonies in Asia and Africa based on the voltage and frequency they used in their own countries.

Attempts To Standardize Voltage

Now, the question arises… didn’t we ever attempt to standardize the voltage and frequency for electricity transmission? Well, the International Electrotechnical Commission (IEC) was one of the first organizations to attempt to standardize voltages and electrical outlets. It collaborated with Holland’s International Questions Commission in 1934 and formed a committee to figure out a solution to the problem of different socket designs and varied electricity parameters like voltage and frequency. However, many fascist-controlled nations had emerged in Europe in the aftermath of World War 1, and it was an uphill task to convince the dictators of those nations to adopt a common standard.

Unfortunately, just when the committee was starting to make some progress in this direction, World War 2 erupted, halting all of its efforts to standardize. The effort was then effectively abandoned in the 1950s, with the IEC concluding that even within the limited geographical region of Europe, it was very difficult to agree on a single standard, let alone achieve one across the rest of the world.

The IEC also doesn’t have a mandate to compel nations to follow a common standard. It is like the United Nations General Assembly for electrical standards, which means that it can put forward a standard, but doesn’t have the power to direct nations to follow it. Moreover, as time passed, each country kept building on its own type and design of electrical transmission, and the sheer number of installed systems became too large to redesign and reconfigure. Everyone had invested in their own system for decades, making it economically unviable to adopt a new standard by redesigning the whole electrical setup from the point of generation all the way to distribution.

A Final Word

So, to summarize, countries in North America adopted 110-120 volt AC to minimize the risk of injury and death, but did so at the cost of power efficiency. They also adopted the 60-hertz frequency, which Tesla considered optimal for AC transmission. European countries, on the other hand, went for 220-240 volt AC to minimize electricity losses, but debatably erred by choosing the 50-hertz frequency. Systems working on a 50-hertz frequency are more prone to flickering.

Also, as European countries like Britain, France, Germany, Spain and Portugal had colonies in Asia and Africa, they installed electrical transmission systems using the voltage and frequency rating that they used in their own countries. This is why we have variations in voltage, frequency and electrical power outlets across the globe, and it doesn’t look like standardization will ever be achieved.