
The ground-breaking observation of Intel co-founder Gordon Moore paved the way for tremendous growth in nanotechnology and nanoscience, and his words later came to be accepted as a law.

Humans have always been fascinated by everything from the largest scales, such as the cosmos, to the very smallest, such as the recently explored nano-world.

The unquenchable thirst of curious minds has opened humankind's eyes to the numerous opportunities the nanoscale has to offer. Nanoscience is the field that explores these possibilities: it studies structures and molecules at the nanoscale. Nanotechnology, on the other hand, refers to the practical applications of nanoscience.

The terms nanoscience and nanotechnology are often used interchangeably. Both cover work on structures smaller than about 100 nanometers in at least one dimension.

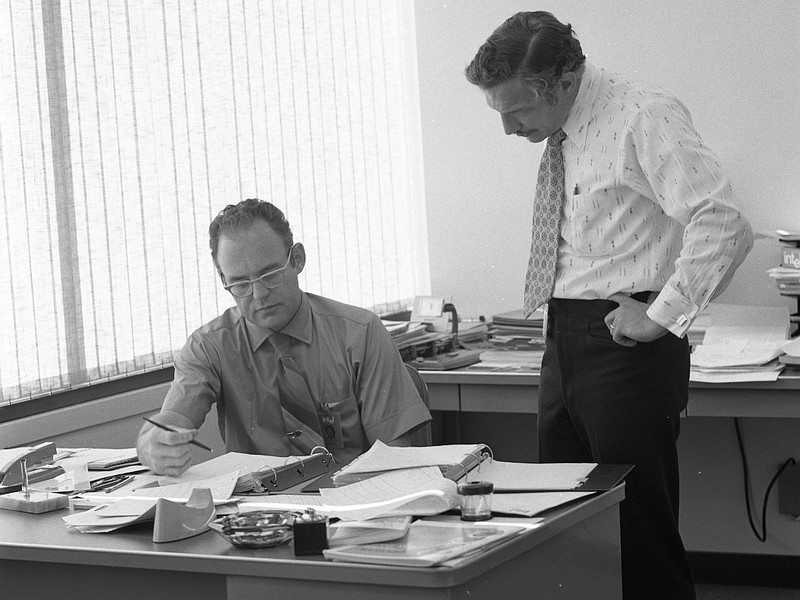

A few visionary scientists have been able to predict the future of this field with remarkable accuracy. One of them is Gordon E. Moore, co-founder of the microprocessor manufacturing giant Intel.

What Is Gordon Moore’s Statement?

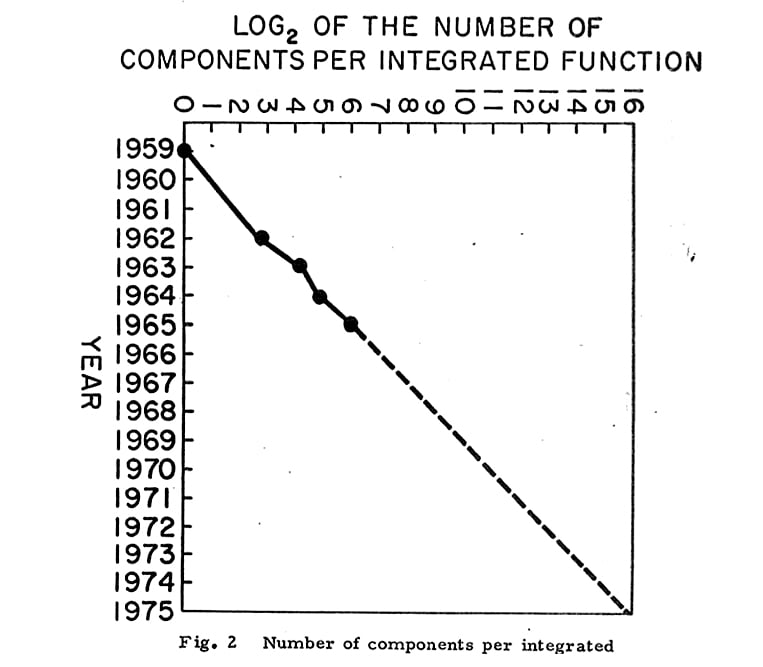

On April 19, 1965, while working at Fairchild Semiconductor, Gordon Moore published an article in Electronics magazine observing that the number of components on an integrated circuit (IC) had been doubling roughly every year, and predicting that the trend would continue for at least another decade. In 1975 he revised the pace to a doubling approximately every two years, the figure most commonly associated with Moore's Law today.

Because more transistors could fit into the same area of circuitry, the observation also implied that computing power would keep rising at a similar pace, and that machines built on this technology would become smaller, faster, and cheaper over time, fueling rapid innovation. A quantity that doubles at a fixed interval grows exponentially, so Moore's observation predicted exponential, not steady, growth in chip complexity.
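A fixed doubling interval is easiest to see as a formula: a count that starts at N₀ and doubles every T years reaches N₀ · 2^(t/T) after t years. The Python sketch below assumes perfectly regular doubling, which real chips never follow exactly; the function name `projected_transistors` and the starting count of 1,000 transistors are hypothetical, chosen only for illustration.

```python
def projected_transistors(n0: float, years: float, doubling_years: float = 2.0) -> float:
    """Project a transistor count assuming perfectly regular doubling.

    n0             -- starting transistor count (hypothetical)
    years          -- elapsed time in years
    doubling_years -- assumed doubling interval T in years
    """
    return n0 * 2 ** (years / doubling_years)


# Hypothetical chip with 1,000 transistors, projected 10 and 20 years ahead
# with a two-year doubling interval.
for years in (10, 20):
    print(years, round(projected_transistors(1_000, years)))
# 10 32000
# 20 1024000
```

Doubling the time span from 10 to 20 years multiplies the result by another factor of 32, not by 2, which is what exponential growth means in practice.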

Moore's observation went on to become a driving force behind the technological revolution in the semiconductor, computing, and electronics industries, at Intel and elsewhere.

Accepting His Statement As A Law

It is interesting to note that Moore's Law is not a law in the scientific sense: it is an empirical observation about an industry trend, not a consequence of physical principles. Its immense popularity and accuracy nonetheless led to its general acceptance as a 'law'.

The term ‘Moore’s Law’ was coined by Professor Carver Mead of the California Institute of Technology around 1975.

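Over time, other observations and amendments were added to Moore's Law to better reflect current trends, and the doubling interval itself has been restated several times. Moore's original 1965 projection was a doubling every year, which he revised to every two years in 1975; the often-quoted figure of 18 months is usually attributed to Intel executive David House, who applied it to chip performance rather than to transistor count.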
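Small changes in the doubling interval add up quickly. As a rough worked example, assuming perfectly regular doubling, the growth factor G over ten years (120 months) for a doubling interval of T months compares as follows for three intervals that commonly appear in discussions of Moore's Law (12, 18, and 24 months):

$$
\begin{aligned}
G(T) &= 2^{120/T} \\
G(12) &= 2^{10} = 1024 \\
G(18) &= 2^{6.\overline{6}} \approx 102 \\
G(24) &= 2^{5} = 32
\end{aligned}
$$

Over a single decade, a one-year interval and a two-year interval differ by a factor of 32 in the predicted growth.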

Benefits Of Moore’s Law

As mentioned earlier, Moore's Law was revolutionary for the semiconductor, computing, and electronics industries. It set the pace at which computing power improved, making computers faster, cheaper, and more accessible.

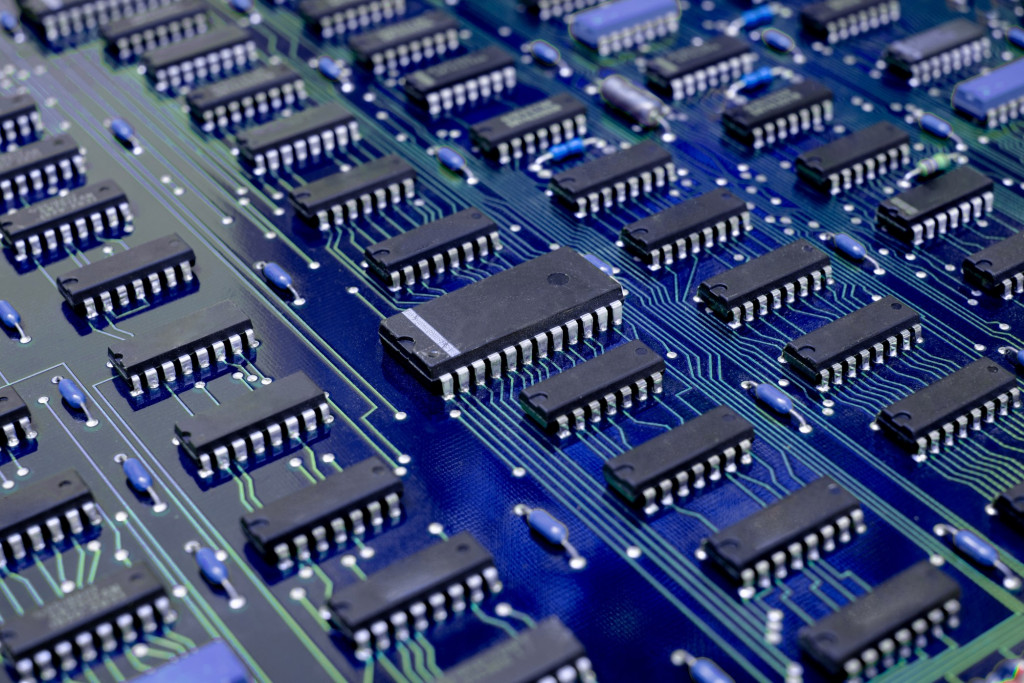

Practically everything around us now contains more and more electronic components, most of them built around integrated circuits and microprocessors. Packing more, smaller transistors into a given area lets integrated circuits switch faster and do more work in parallel while using less energy per operation. We are now able to easily process gigabytes of data in the blink of an eye!

One striking implication of this law was that electronic components, especially semiconductors, integrated circuits, and computers, would gradually become cheaper. This has indeed happened, putting the technology within reach of the general public. From $90,000 laptops down to an affordable $800 smartphone that performs similar tasks, technology has certainly come a long way.
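One simplified way to see why components get cheaper: if a chip's price stays roughly constant while the number of transistors on it doubles, the cost per transistor halves with each doubling. The sketch below assumes exactly that; the $50 chip price, the starting count of one million transistors, and the function name `cost_per_transistor` are hypothetical, and real prices depend on much more than transistor count.

```python
def cost_per_transistor(chip_price: float, transistors: int) -> float:
    """Each transistor's share of the chip's price."""
    return chip_price / transistors


# Hypothetical: the chip's price stays at $50 while the transistor count
# doubles each generation, starting from one million.
chip_price = 50.0
count = 1_000_000
for generation in range(4):
    share = cost_per_transistor(chip_price, count)
    print(f"generation {generation}: ${share:.8f} per transistor")
    count *= 2  # assumed doubling per generation
```

After three doublings, each transistor's share of the price has fallen to one eighth of where it started.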

Future Predictions

Although Moore's Law has ruled the semiconductor and electronics industries for a long time, many scientists and researchers expect that transistor scaling will eventually run into hard physical limits.

Thermal constraints are one such limit. Packing more transistors into the same area raises the power density of a chip, and beyond a certain point the heat generated can no longer be removed efficiently enough to keep the transistors at a safe operating temperature, which makes further shrinking impractical.
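The power-density argument can be sketched numerically. Assume, hypothetically, that each generation doubles the number of transistors per unit area and multiplies each transistor's power by a factor `power_scale`. Power per unit area then changes by 2 × `power_scale` per generation: it stays flat only if per-transistor power halves, and it climbs otherwise. The function name and the scale factors below are illustrative assumptions, not measured values.

```python
def power_density_trend(generations: int, power_scale: float) -> list[float]:
    """Relative power per unit chip area over successive generations.

    Hypothetical model: each generation doubles the transistors per unit area
    and multiplies each transistor's power by `power_scale`.
    Generation 0 is normalized to 1.0.
    """
    density = 1.0
    trend = [density]
    for _ in range(generations):
        density *= 2 * power_scale  # twice the transistors, each at scaled power
        trend.append(density)
    return trend


print(power_density_trend(3, 0.5))  # [1.0, 1.0, 1.0, 1.0]: heat per area stays flat
print(power_density_trend(3, 0.7))  # rises about 1.4x per generation
```

With `power_scale = 0.7`, three generations leave the chip dissipating roughly 2.7 times as much heat per unit area, which is the kind of trend that turns cooling into the limiting factor.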

Moreover, new materials that replace silicon in microprocessors and other IC components may make this law obsolete.

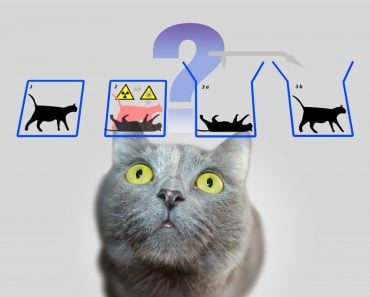

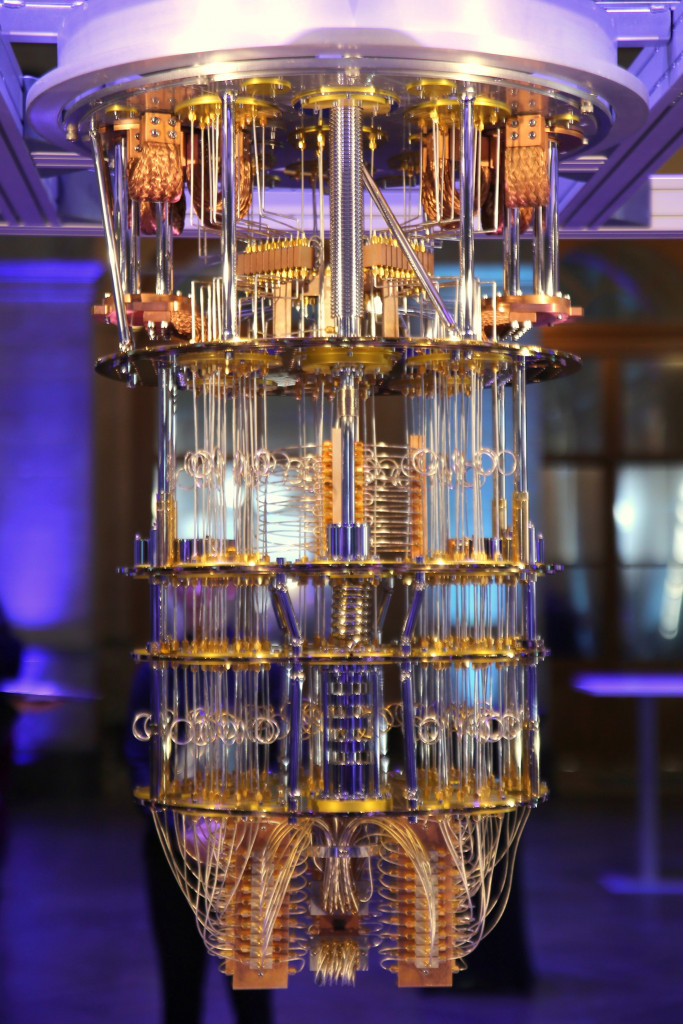

Quantum computing, which is attracting growing interest, sidesteps some of the limits of classical computing: instead of ordinary bits, it uses quantum bits (qubits) and quantum-mechanical effects such as superposition and entanglement.
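Superposition and entanglement can be illustrated with a tiny classical simulation of two qubits. The sketch below is a hedged illustration, not how real quantum hardware is programmed: it stores the four amplitudes of the states |00>, |01>, |10>, |11> in a Python list, applies a Hadamard gate to the first qubit (creating a superposition), and then a CNOT gate (entangling the two). The helper names `hadamard_q0` and `cnot_q0_q1` are hypothetical; real-valued amplitudes are enough for this particular circuit.

```python
import math


def hadamard_q0(state: list[float]) -> list[float]:
    """Apply a Hadamard gate to qubit 0 (the left bit in |q0 q1>)."""
    s = 1 / math.sqrt(2)
    out = state[:]
    for i in (0, 1):  # indices of |00> and |01>, where qubit 0 is 0
        a, b = state[i], state[i + 2]  # partner states |10> and |11>
        out[i] = s * (a + b)
        out[i + 2] = s * (a - b)
    return out


def cnot_q0_q1(state: list[float]) -> list[float]:
    """CNOT with qubit 0 as control: flip qubit 1 when qubit 0 is 1."""
    out = state[:]
    out[2], out[3] = state[3], state[2]  # swap |10> and |11> amplitudes
    return out


# Amplitudes for |00>, |01>, |10>, |11>; start in |00>.
bell = cnot_q0_q1(hadamard_q0([1.0, 0.0, 0.0, 0.0]))
probabilities = [round(a * a, 3) for a in bell]
print(probabilities)  # [0.5, 0.0, 0.0, 0.5]
```

The result, a so-called Bell state, yields 00 or 11 with equal probability and never 01 or 10: measuring one qubit fixes the other. Simulating n qubits this way needs 2^n amplitudes, which is one reason classical machines struggle to mimic large quantum computers.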

Still, whether Moore's Law will eventually "fail" is hotly debated. Some argue that innovations such as monolithic scaling and system scaling will carry it into the future.

Monolithic scaling continues classic Moore's Law scaling: it shrinks transistors and lowers the voltages at which they operate in order to increase performance.
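Why lowering the voltage helps can be seen from the standard first-order model of dynamic (switching) power in CMOS circuits, which is not part of the original article. Here α is the fraction of the circuit switching per clock cycle, C the switched capacitance, V the supply voltage, and f the clock frequency. Holding α, C, and f fixed as a simplifying assumption, a 30% voltage reduction gives:

$$
P_{\text{dyn}} = \alpha\, C\, V^{2} f
\qquad\Longrightarrow\qquad
\frac{P_{\text{dyn}}(0.7\,V)}{P_{\text{dyn}}(V)} = 0.7^{2} = 0.49
$$

Because power depends on the square of the voltage, a modest voltage cut roughly halves the switching power, leaving room for more transistors or higher clock speeds within the same thermal budget.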

System scaling refers to combining different types of processors and chips into a single heterogeneous system, using technologies such as advanced packaging and chip-to-chip interconnects.

A Final Note

Moore's Law shows how one person's prediction can shape the reality that follows: a "prophecy" that has stood the test of time!

The fast-paced modern world is seeing rapid changes in technology, from the nanoscale to the megascale. It will be interesting to see how many more years Moore's Law survives and remains relevant. Whatever happens, it will be for the betterment of humankind… hopefully!
