The speed of light has been measured with steadily increasing accuracy since the 17th century: Galileo's hilltop lantern experiment in the 1630s; Ole Roemer's 1676 calculation from the irregular eclipse timings of Jupiter's moon Io; James Bradley's 1728 measurement using the aberration of starlight; Fizeau and Foucault's mid-19th-century terrestrial experiments with toothed wheels and rotating mirrors; and finally interferometry and laser measurements in the 20th century, after which the meter was redefined so that the speed is exactly 299,792,458 m/s.

The first Maglev train commenced its journey in 2004 and it has continued to dazzle passengers ever since. The world’s fastest passenger train travels at an outrageous 431 km/hr, slicing through the surrounding air; to someone clinging to the tip of the train’s nose, the ride would look like rocketing through a wormhole. At this velocity, the roughly 3,900 km straight-line stretch between New York and Los Angeles would be covered in a little over 9 hours. However, one of mankind’s most invigorating inventions is still astronomically inferior when compared to one of nature’s prodigies. Light travels at an incomprehensible speed of 299,792.458 km/s or 1,079,252,848.8 km/hr. A Maglev travels at around 0.00004% of the speed of light.
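
For readers who want to check the arithmetic, here is a minimal Python sketch of the unit conversion and the percentage quoted above. The function names are made up for this illustration; the only inputs are the two speeds already given in the text.

```python
# Speed of light in km/s (exact by definition) and the Maglev's top speed
# in km/hr as quoted in the text.
C_KM_PER_S = 299_792.458
MAGLEV_KM_PER_HR = 431.0


def km_per_s_to_km_per_hr(speed_km_per_s: float) -> float:
    """Convert km/s to km/hr (3600 seconds in an hour)."""
    return speed_km_per_s * 3600.0


def percent_of_light_speed(speed_km_per_hr: float) -> float:
    """Express a speed given in km/hr as a percentage of the speed of light."""
    return 100.0 * speed_km_per_hr / km_per_s_to_km_per_hr(C_KM_PER_S)


print(f"light:  {km_per_s_to_km_per_hr(C_KM_PER_S):,.1f} km/hr")
print(f"Maglev: {percent_of_light_speed(MAGLEV_KM_PER_HR):.5f} % of light speed")
```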

I’m not even exaggerating or using a gaudy, inflated adjective to describe its magnitude. The speed is literally incomprehensible. Our minds aren’t equipped to grasp objects moving SO swiftly. Still, from a suitable vantage point, calculating huge velocities is not an arduous task. The simplest way is to let the object travel a circuit of a known distance while timing one lap. Divide the distance by this time and you get the distance the object travels per unit of time, in whatever units you choose. Pretty simple. This method works well for measuring the velocity of rolling stones, sprinting cheetahs and Maglev trains.

However, measuring the speed of light on Earth seems impossible. So how did we end up pinning it down to three decimal places, precisely enough to base the definition of the standard unit of distance, the meter, on it and make it one of the most accurately known physical quantities?

Galileo’s Lamps

In antiquity, “scientists” were largely empirical philosophers whose observations were confined by perception. Because light traveled from a burning torch to a manuscript so quickly, people naturally believed it was transmitted instantaneously, meaning its velocity was infinite. In fact, light is so swift that there is no noticeable lag in the position of the Earth’s shadow on the moon during an eclipse! Empedocles argued that light must nevertheless take time to travel, a claim that Aristotle, one of the finest logicians of all time, rejected. Eventually, the debate lost its luster and the criticisms grew dormant. Until the 17th century, that is, when Galileo revived it.

However, this time Galileo wasn’t confined by his perception, but rather by the technology of his time. Galileo dramatically underestimated the velocity and tried to measure it terrestrially. He and his assistant stood on two distant hills, each holding a bright source of light which they covered and uncovered.

Galileo uncovered his light, and upon seeing it, his assistant did the same. Galileo sought the simplest method: he tried to calculate the speed of light from the time that elapsed until he saw his assistant’s light, coupled with the known distance between them. Of course, his results were “inconclusive”, a conclusion he followed with a smug remark: “if not instantaneous, it is extremely rapid.” Yeah, thanks, Galileo… who knew?
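
To see why the lantern experiment could never have worked, here is a rough Python sketch comparing the light's round-trip time with a human reaction time. Both the hill separation (about a mile) and the reaction time (0.2 s) are assumptions chosen for illustration, not figures from Galileo's account.

```python
C_M_PER_S = 299_792_458        # speed of light in m/s (exact by definition)
HILL_SEPARATION_M = 1_600.0    # assumption: hills roughly one mile apart
REACTION_TIME_S = 0.2          # assumption: typical human reaction time


def round_trip_time(separation_m: float, speed_m_per_s: float = C_M_PER_S) -> float:
    """Time for light to travel to the far hill and back, in seconds."""
    return 2.0 * separation_m / speed_m_per_s


delay_s = round_trip_time(HILL_SEPARATION_M)
print(f"light round trip: {delay_s * 1e6:.1f} microseconds")
print(f"reaction time is roughly {REACTION_TIME_S / delay_s:,.0f} times longer")
```

Under these assumptions the delay Galileo was hunting for is about ten millionths of a second, thousands of times shorter than the time it takes a person to react and uncover a lantern.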

Moonwatching And Stargazing

If the transmission of light isn’t instantaneous and its photons gallop at a finite velocity, then however exorbitant that velocity may be, light needs some time, no matter how trifling, to reach all the dark corners. Its pace can therefore be put to the test over astronomical distances. In 1676, Ole Roemer exploited this to calculate the speed of light, although he had not set out to measure it. Roemer was actually fascinated by Io, the innermost of Jupiter’s four large moons, which vanishes into Jupiter’s gigantic, overwhelming shadow during each orbit until it escapes the darkness and is illuminated by the Sun’s light again.

Roemer carefully observed Io and noticed that, depending on the configuration of the Sun, Earth and Jupiter, there was a difference between the predicted times of the eclipses and the times at which they were actually observed. Roemer reasoned that the light’s travel time to our telescopes lengthened and shortened as the Earth and Jupiter moved farther from or closer to each other in their orbits. The eclipses appeared late when the light had to cover a longer distance. When his colleagues expressed doubts about his findings, Roemer calmly predicted that Io’s eclipse on the 9th of November of the same year would be 10 minutes late. To their bewilderment, his prediction was spot on.

Later, using his findings, Roemer approximated the speed of light to be 214,000 km/s. Despite being about 86,000 km/s too slow, his ingenuity is remarkable given that he arrived at this value nearly 350 years ago, long before any of the precision technology we have today. In fact, his approximation deviated from the true value largely because the planetary distances and eclipse timings available to him were inaccurate. The method itself was sound: applying it to modern measurements results, you guessed it, in a close approximation of the speed of light. Genius.

Still about 86,000 km/s short, astronomers continued to toil to narrow the error down to a minimum. In 1728, James Bradley estimated the speed of light by observing an aberration. An aberration is a tiny change in the apparent position of a star or any heavenly body caused by the combination of the finite speed of light and the motion of the observer. Bradley observed a star in Draco, a constellation of the northern sky, and found that its apparent position changed throughout the year as Earth revolved around the Sun. He used the angle of deviation of the starlight, along with Earth’s velocity around the Sun, and calculated the speed of light to be 301,000 km/s. Yes, that’s wrong, but again, we must appreciate how close Bradley came despite the lack of technology. Even so, the number isn’t close enough.
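
The geometry behind the aberration method can be sketched in a few lines of Python. For a star lying perpendicular to Earth's motion, the tilt angle θ satisfies tan(θ) = v / c, so c = v / tan(θ). The numbers below are modern approximate values (an aberration angle of about 20.5 arcseconds and an orbital speed of about 29.78 km/s), used here as assumptions for illustration; they are not Bradley's own figures.

```python
import math

ABERRATION_ARCSEC = 20.5        # assumption: modern approximate aberration angle
EARTH_ORBITAL_KM_PER_S = 29.78  # assumption: modern mean orbital speed of Earth


def speed_of_light_from_aberration(angle_arcsec: float, observer_km_per_s: float) -> float:
    """Return c in km/s from tan(theta) = v / c."""
    angle_rad = math.radians(angle_arcsec / 3600.0)  # arcseconds -> degrees -> radians
    return observer_km_per_s / math.tan(angle_rad)


c_estimate = speed_of_light_from_aberration(ABERRATION_ARCSEC, EARTH_ORBITAL_KM_PER_S)
print(f"estimated c: {c_estimate:,.0f} km/s")
```

With these modern inputs the estimate lands within a few hundred km/s of the accepted value, which shows how sensitive the result is to the tiny angle being measured.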

Closing In

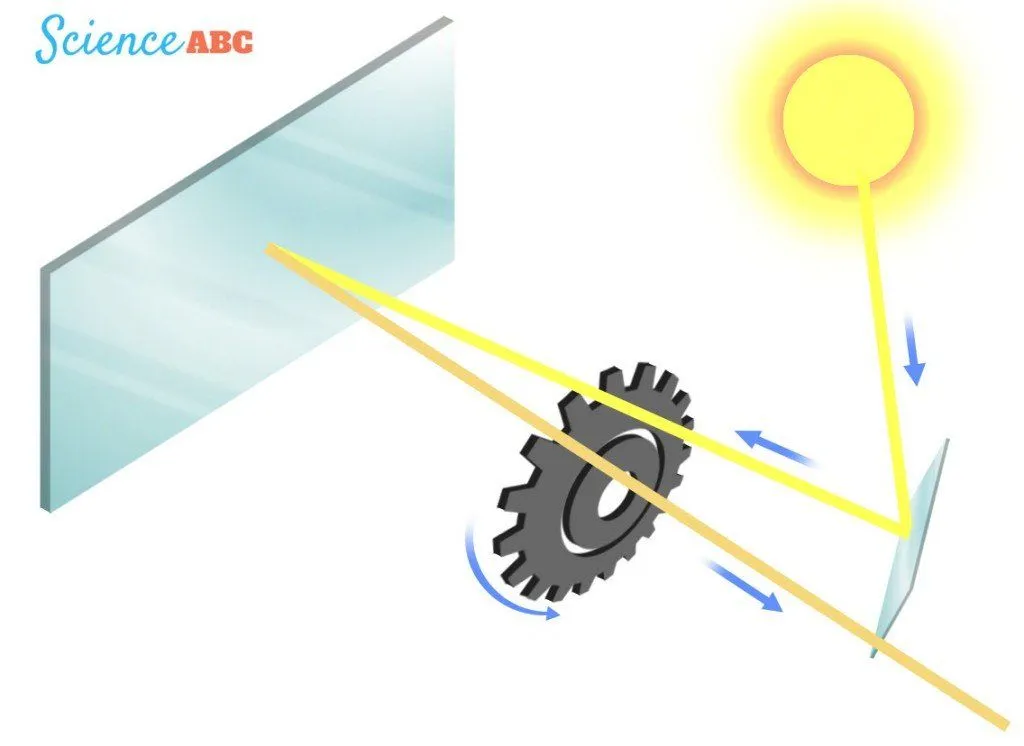

A century later, in 1849, Armand Fizeau set out to measure the speed of light. Fizeau was the first person to measure it terrestrially, disregarding the terrifying solitary isolation of the heavens. Fizeau’s apparatus included a source of light, a reflecting mirror and a toothed wheel through which the beam of light passed. The mirror was placed a certain distance away from the source of light, with the wheel rotating between them. The beam of light passed through the wheel twice: first while traveling towards the mirror and again while returning after being reflected.

Initially, the light went through the wheel’s teeth randomly. Gradually, however, Fizeau adjusted the rate of rotation such that the light went through one gap during its departure and through the next gap during its return. With the knowledge of the distance between the mirror and the light’s source and the wheel’s rate of rotation, Fizeau calculated the speed of light to be around 315,000 km/s. Later, Léon Foucault used an improved apparatus that comprised rotating mirrors and brought the value down to 298,000 km/s. Still an inch or two behind.
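
The toothed-wheel calculation can be sketched as follows. If the wheel has N teeth and spins f times per second, one gap replaces the previous one every 1 / (N × f) seconds; when that equals the light's round-trip time 2d / c, we get c = 2 × d × N × f. The figures below (a path of 8,633 m, a 720-tooth wheel, and 25.2 revolutions per second for the gap-to-next-gap condition) are commonly quoted values for Fizeau's setup and should be treated as illustrative assumptions rather than as data from this article.

```python
def speed_from_toothed_wheel(distance_m: float, teeth: int, rev_per_s: float) -> float:
    """Return c in m/s when light leaves through one gap and returns through the next.

    The wheel advances by one tooth-plus-gap period in 1 / (teeth * rev_per_s)
    seconds, which must match the round-trip travel time 2 * distance_m / c.
    """
    return 2.0 * distance_m * teeth * rev_per_s


# Assumed, commonly quoted figures for Fizeau's 1849 experiment.
DISTANCE_M = 8_633.0
TEETH = 720
REV_PER_S = 25.2

c_fizeau = speed_from_toothed_wheel(DISTANCE_M, TEETH, REV_PER_S)
print(f"estimated c: {c_fizeau / 1000:,.0f} km/s")
```

Under these assumptions the result comes out at a little over 313,000 km/s, in the same range as the value of around 315,000 km/s credited to Fizeau.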

After Maxwell bequeathed us the laws of electromagnetism, the speed of light, ‘c’, could be obtained as the reciprocal of the square root of the product of the magnetic permeability and electric permittivity of free space. In 1907, Rosa and Dorsey calculated this to be 299,788 km/s, the most accurate value at that time. Later, as the technology caught up with our timeless, unabated curiosity, Froome in 1958, with the aid of a microwave interferometer, calculated the speed of light to be 299,792.5 km/s. This was nail-bitingly close to the true value!
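
Maxwell's relation, c = 1 / √(μ₀ε₀), is easy to check numerically. The sketch below uses the classical textbook values of the two constants (μ₀ = 4π × 10⁻⁷ H/m and ε₀ ≈ 8.854187817 × 10⁻¹² F/m); these values are assumptions supplied for illustration and do not appear in the article.

```python
import math

MU_0 = 4.0 * math.pi * 1e-7    # magnetic permeability of free space, H/m (classical value)
EPSILON_0 = 8.854187817e-12    # electric permittivity of free space, F/m (classical value)


def speed_of_light_from_constants(mu_0: float, epsilon_0: float) -> float:
    """Return c in m/s as the reciprocal of sqrt(mu_0 * epsilon_0)."""
    return 1.0 / math.sqrt(mu_0 * epsilon_0)


c_maxwell = speed_of_light_from_constants(MU_0, EPSILON_0)
print(f"c from Maxwell's relation: {c_maxwell / 1000:,.3f} km/s")
```

The catch, historically, was that both constants had to be measured electrically, so the accuracy of c obtained this way was only as good as those laboratory measurements.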

Subsequently, scientists realized that present and future measurements based on highly sophisticated atomic standards weren’t limited by technology, but rather by uncertainties in the definition of the units themselves. The vague definition of a meter threatened the accuracy of experiments for posterity. Even though the value of the speed of light was known to within an error of about 1 m/s, scientists felt obliged to get rid of every chink in the armor, and in doing so, construct an infallible definition of a meter.

The National Bureau of Standards in Boulder, Colorado, used helium-neon lasers and meticulously accurate cesium clocks to measure the speed of light. In 1983, the meter was then redefined as the distance light travels in a vacuum in 1/299,792,458 of a second, such that the speed of light in a vacuum is *drum roll* exactly 299,792,458 m/s or 299,792.458 km/s. Not instantaneous, but yes, extremely rapid!
