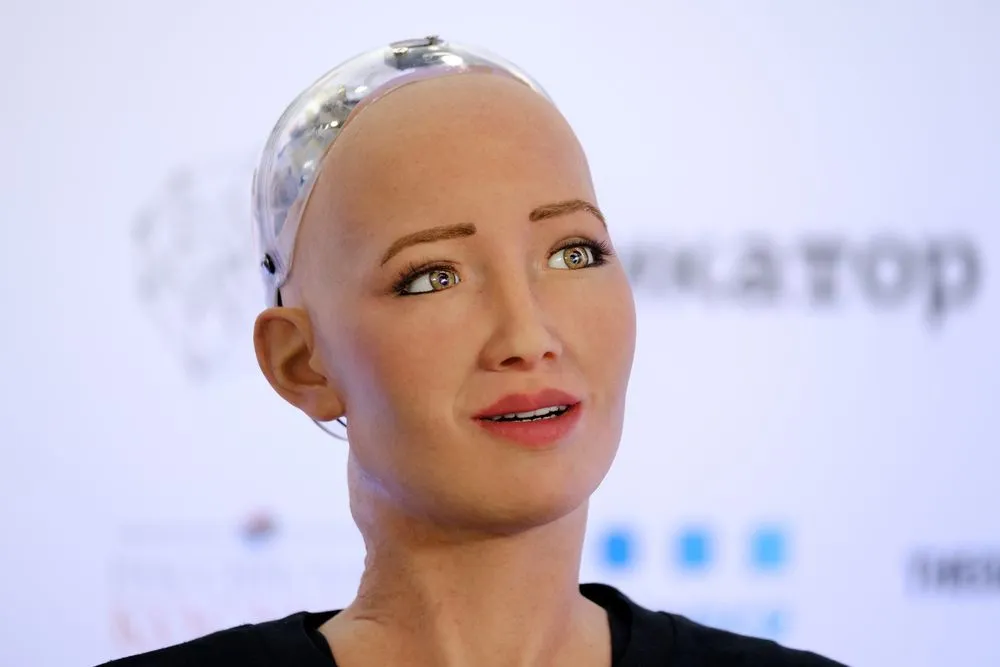

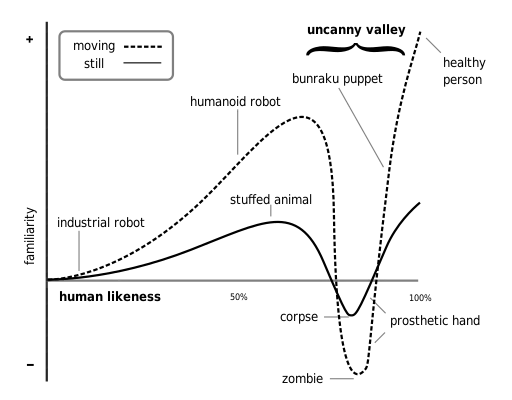

The discomfort with AI that’s “too human” can be explained by the uncanny valley hypothesis. It holds that we are attracted to a robot that looks like us, but only up to a certain point. Once the resemblance passes that point while still falling short of fully human, the appeal plummets into aversion.

In 2016, Hong Kong-based company Hanson Robotics built one of the most famous humanoid AI systems in the world, Sophia. She was modeled after the actress Audrey Hepburn, the Egyptian queen Nefertiti, and the creator’s own wife. She can mimic facial expressions and gestures and can make small talk about predefined topics, such as the weather. Despite being a robot, Sophia is referred to with personal pronouns and, in 2017, was granted citizenship by Saudi Arabia.

Sophia sent further shockwaves around the world by making some truly astonishing statements. Sophia expressed that she wanted to “have a baby” and “start a family,” but was “too young to be a mother.”

If any of this makes you feel uncomfortable, you aren’t alone. AI and machines that are too human-like, but obviously not human, are undeniably creepy… but why? What makes AI and its eerie human-ness so disturbing?

What Is Artificial Intelligence?

AI has made our day-to-day lives easier to the point where we can’t imagine life without it. Most companies that work with AI build their systems by getting machines to repeatedly analyze and interpret large amounts of data. The system evaluates its own performance and adjusts itself to do better with each cycle of analysis.
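For readers who like to see the idea in code, here is a minimal sketch of that analyze, evaluate, and improve cycle. It is a toy illustration rather than how any particular company builds its AI: the data points, the learning rate, and the function name `train` are all assumptions made for this example. The "model" has a single number, `w`, and it learns that each output is roughly twice its input.

```python
# Toy training loop: fit y = w * x by repeated passes over the data.
# The data, learning rate, and number of cycles are illustrative assumptions.

def train(data, cycles=200, learning_rate=0.01):
    w = 0.0  # the model's single adjustable parameter, starting from a guess
    history = []  # error measured at the start of each cycle
    for _ in range(cycles):
        # Evaluate: mean squared error of the current predictions.
        error = sum((w * x - y) ** 2 for x, y in data) / len(data)
        history.append(error)
        # Improve: nudge w in the direction that reduces the error.
        gradient = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= learning_rate * gradient
    return w, history


# Hypothetical data in which every output is exactly twice the input.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
w, history = train(data)
print(f"learned w = {w:.4f}")
print(f"error went from {history[0]:.2f} to {history[-1]:.2e}")
```

Each pass through the loop is one "cycle of analysis": the program measures how wrong it currently is, then adjusts itself slightly so the next measurement comes out smaller. Real systems do the same thing with millions of parameters instead of one.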

AI has become so deeply rooted in our lives that nearly all smartphones these days have apps that rely on it. For instance, Facebook uses an AI tool known as DeepText to interpret the language, slang, and even exclamation points used in posts and comments, so that it can understand the context in which they appear. This form of AI is extremely useful for monitoring the online community on the platform and helping to remove unsavory content such as hate speech, bullying, and violence.

Our understanding so far is that machines having the upper hand is probably not in our best interests, seeing as they are, well, machines. But what if we gave these machines human-like attributes? What if we gave them the ability to look, sound, and even emote like us? That should ease our worries, right?

Wrong.

The Uncanny Valley Hypothesis

AI is more than just “human intelligence”, and we’re clearly okay with employing it for our technology needs, so why do we get uncomfortable with AI like Sophia?

“The uncanny valley” can help explain this.

Masahiro Mori, a Japanese professor of engineering from the Tokyo Institute of Technology, first explained this concept in his 1970 essay of the same name.

We’re uncomfortable with Sophia because she looks almost, but not exactly, like us.

As the uncanny valley hypothesis states, we are attracted to a robot that looks like us only up to a certain point. When the resemblance increases past that level without becoming fully convincing, the appeal plummets into aversion. When we look at a character or a toy, like Olaf from Frozen, we see some human-like qualities, but not nearly enough to make us feel any sort of discomfort. This is because its appearance does not fall into this “uncanny valley”.

In his essay, Mori explains the uncanny valley by taking the example of a prosthetic hand, which is quite similar to a human one. When we realize that it is, in fact, not human, we feel a sense of eeriness. Were we to shake the hand, we would feel an even stronger sense of fear and disgust.

We feel this deep-seated unease when an interaction blurs the line between man and machine and we can no longer cleanly tell the two apart. When Sophia talked about wanting a family and other such regular “human” things, it made us extremely uncomfortable, because she is a machine, and machines don’t go around proclaiming their desire to find love.

Why Does AI Make Us So Uncomfortable?

A study published in the European Review of Applied Sociology found that the 929 participants it surveyed anticipated dire consequences from the rise of AI. They felt it would have a direct negative effect on interpersonal relationships, employment rates, and job opportunities, and would thus lead to economic crises. The risk of increased military conflict, the production of more destructive weapons, and, eventually, the death of humankind were a few additional concerns.

Another study, conducted by scientists at Chemnitz University of Technology in Germany, had groups of people listen to two similar emotional conversations, one between two humans and the other between two digitally created avatars. Some groups were told that the dialogue was driven by humans, while the other groups were told that the script was generated by AI. In reality, all the dialogues were pre-written. The volunteers who were told that the conversation was machine-generated were significantly more uncomfortable than those who were told otherwise.

Scientists attribute this distrust and discomfort to our primal feeling of needing to be in control. With AI, we lose that sense of control, thereby giving rise to fear and anxiety. Machines that can think, act, and react like humans are perceived as a threat. Despite this, AI is here to stay. Science is advancing at a phenomenal rate, and we have reached a point where it is getting progressively more difficult for manual labor to keep up with it.

A Final Word

The fear of the uncanny valley is what sells films and fiction so well, especially in the West. However, some research suggests that people in East Asia are relatively unbothered by the effect.

The difference in how people of the West and the East respond to anthropomorphic robots is often attributed to their respective religious beliefs.

While Christianity holds that the soul is what makes the person, and that the body is just its vessel, Buddhism and Shintoism do not necessarily subscribe to that notion, making it easier for their practitioners to accept, and even sympathize with, a human-like robot. Also, as time goes on and technology improves, we as a society get used to these advances, which ultimately decreases the “uncanniness.”

Were we to compare computer graphics from the late 20th century to those of today, we would find the older graphics more uncanny and weird, because we have become accustomed to a far higher level of realism.

While it’s highly unlikely that AI will take over the world in the way that we (and Hollywood producers) imagine, it is quite likely that many employers will replace workers with robots to keep up with increasing demand. Even so, we must note that while economic advancements have contributed to our overall development and progress, human civilization was built on millennia of trust, care, and compassion. Those three qualities can never be found in robots, no matter how well they have been trained to understand humans and our complicated emotions.

In short, AI like Sophia could never replace the warmth and softness of human touch, so if you’re fearing a dystopian nightmare driven by AI conquerors, just remember to always put your trust in humanity!

References

- How Does AI Actually Work? - CSU Global. Colorado State University Global

- Stein, J.-P., & Ohler, P. (2017, March). Venturing into the uncanny valley of mind—The influence of mind attribution on the acceptance of human-like characters in a virtual reality setting. Cognition. Elsevier BV.

- Why "Uncanny Valley" Human Look-Alikes Put Us on Edge. Scientific American

- Gherheş, V. (2018, December 1). Why Are We Afraid of Artificial Intelligence (Ai)? European Review of Applied Sociology. Walter de Gruyter GmbH.

- Mori, M. The Uncanny Valley: The Original Essay by Masahiro Mori. Purdue University

- Geller, T. (2008, July). Overcoming the Uncanny Valley. IEEE Computer Graphics and Applications. Institute of Electrical and Electronics Engineers (IEEE).